This post looks at using square bracket notation to extract elements from map columns, array columns, and more complex columns built from maps and arrays.

If you have been using structured data columns in PySpark for a while, you will know that you can use conventional Python square bracket addressing to extract elements from them, much as you would from Python lists and dictionaries, with commands like:

```python
import pyspark.sql.functions as fn

# extracting data from a map (dictionary) column
df = people_df.withColumn("new_col", fn.col("map_col")["map_key"])

# extracting data from an array (list) column
df = people_df.withColumn("new_col", fn.col("array_col")[0])
```

However, suppose you have a more complex situation: an array column that is an array of maps (a list of dictionaries, in Python terms). It turns out that there is a simple way to extract all the map values that share a given key into a new array column.

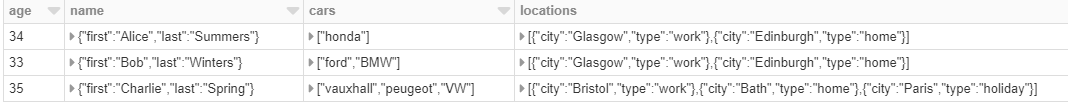

Suppose we have the following data frame

To extract the value of the “city” key from the first element of each `locations` array, we would simply need:

```python
# extracting a single item from an array of maps
df = people_df.withColumn("first_city", fn.col("locations")[0]["city"])
```

However, what if we wish to extract all the cities into a new array column? This is easily done: we simply address the maps inside the array directly, without an index:

```python
# extracting data from an array of maps to a new array
df = people_df.withColumn("cities", fn.col("locations")["city"])
```

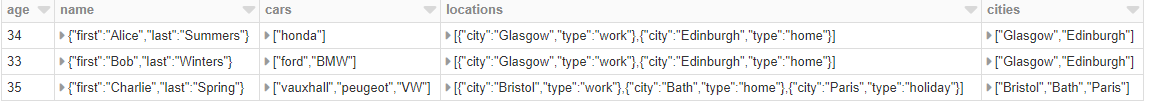

Somewhat surprisingly, given that we did not index into the array at all, this works, and we get:

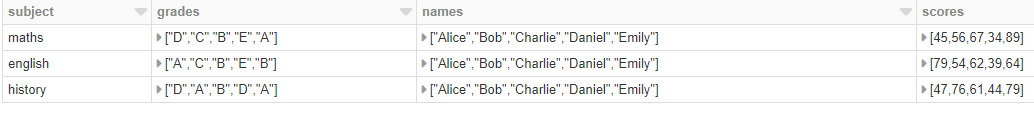

Note that we cannot pull this trick the other way round, with a map of arrays.

While we can pull out specific values as before, we cannot pull out the first student’s results by direct positional addressing in this situation; we get an error message instead. This seems to be because, this way round, the column is interpreted as a struct rather than a map, and a struct's fields can only be selected by name inside the brackets, not by position.

```python
# we can pull out a specified value from a map of arrays to a new column
grade_df = df.withColumn("one_grade", fn.col("performance")["grades"][0])

# however we can't easily extract all first elements from a map of arrays
extracted_df = df.withColumn("one_student", fn.col("performance")[0])
# this gives an ERROR!
```

However, a trick that does work in this case is `.*` addressing, which splits the column's fields into separate top-level columns for simpler processing:

```python
# using .* to extract a struct column to separate columns
exploded_df = df.select("subject", "performance.*")
```

This gives us a nicely split-up set of columns that we can then process further.

A workbook containing examples of this behaviour can be found on GitHub. You will need to download it and re-upload it to Databricks Community Edition to run it.